There is a particular moment, familiar to anyone who has spent time inside a large professional organization, when the promise of a new technology begins to outpace the organization’s ability to understand it. The language becomes inflated—transformational, revolutionary, inevitable—while the underlying systems, those quiet and often neglected structures upon which all work depends, remain stubbornly unchanged.

It was in such a moment that I found myself in conversation with two senior operators at a global law firm—let us call them Sarah and Grant—who had been tasked, in one way or another, with making sense of artificial intelligence. They were not evangelists, nor were they skeptics in the performative sense. They occupied that more interesting middle ground: practitioners confronted with a problem that refused to conform to its own narrative.

The problem, as they described it, was not the AI itself.

Indeed, the systems worked—at least in the narrow, demonstrative sense in which such systems are often said to “work.” They could summarize documents, extract clauses, generate drafts that appeared, at first glance, to be competent. In controlled environments, the results were impressive. One might even say seductive.

But outside those controlled conditions—within the actual operating environment of the firm—the outputs became erratic. Not wrong in any obvious or catastrophic way, but subtly unreliable. A clause would be correctly identified but contextually misplaced. A summary would capture the surface of a document while missing its operative nuance. A generated draft would sound authoritative while quietly introducing assumptions no one had intended.

It was, Sarah observed, “as though the system understood the words, but not the world they belonged to.”

This remark, delivered almost in passing, seemed to crystallize the issue. For what is the “world” of a law firm, if not its accumulated knowledge—its precedents, its classifications, its internal distinctions between what matters and what does not? And what is that knowledge, in practice, but a system of organization: taxonomies, metadata, ownership structures, and the quiet discipline of deciding what is current, what is obsolete, and what is authoritative?

In other words, the firm’s knowledge management.

For years, this function had been treated as a kind of administrative necessity: important, certainly, but rarely strategic. It was the province of librarians, technologists, and the occasional forward-thinking partner; it was funded just enough to avoid failure, but seldom well enough to produce excellence. Its successes were invisible, its failures diffuse.

AI, however, had changed the equation—not by improving these systems, but by exposing them.

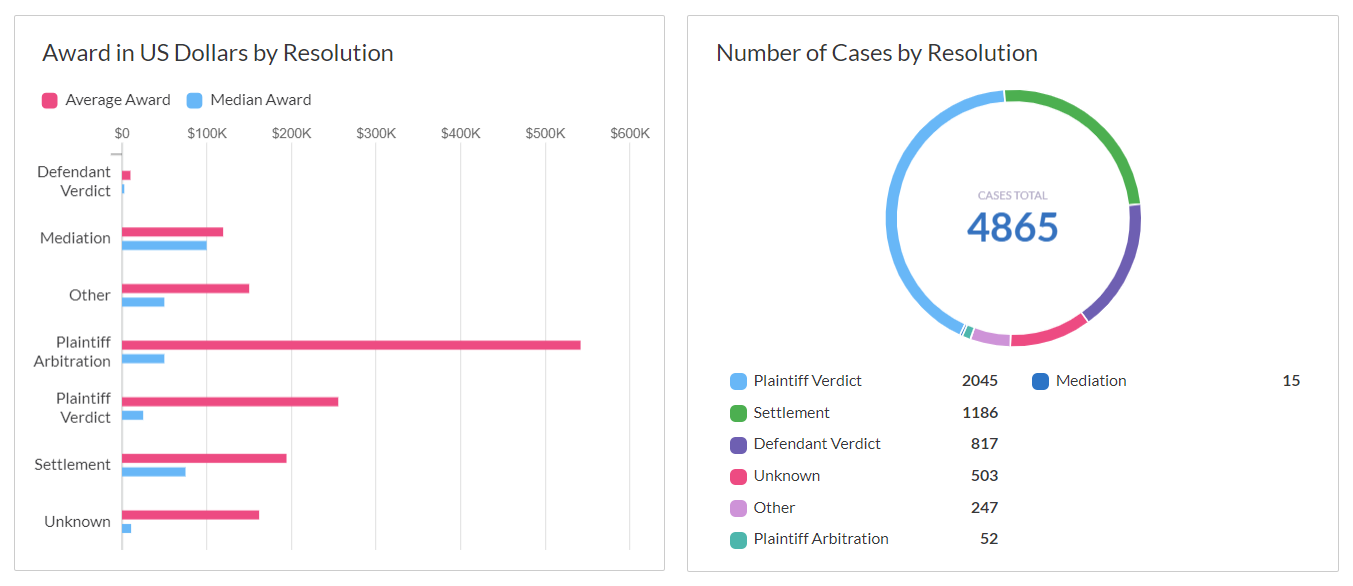

Grant described an early internal deployment in which the firm had attempted to apply an AI model across a broad corpus of transactional documents. The expectation, shaped by vendor demonstrations and industry rhetoric, was that the system would surface insights at scale: patterns across deals, deviations from standard language, opportunities for efficiency.

What emerged instead was something closer to confusion.

Documents that had been mislabeled years earlier were now treated as exemplars. Outdated precedents, never properly archived, were resurfacing as though they were current. Variations in terminology—minor to the human eye—were interpreted by the system as meaningful distinctions, while genuinely important differences were sometimes collapsed into a single category.

The AI, in short, was doing precisely what it had been designed to do. It was identifying patterns. It was generalizing from data. It was producing outputs consistent with its inputs.

The difficulty was that the inputs themselves lacked coherence.

“If you think of it,” Grant said, “we weren’t giving it knowledge. We were giving it a pile.”

This distinction—between a pile and a system—may seem trivial, but it is, in fact, the fulcrum upon which the entire enterprise turns. A pile is an accumulation. A system is an integration. The former can be large, even impressive in its volume; the latter must be structured, governed, and, above all, intelligible.

What Sarah and Grant had begun to realize was that their difficulty with AI was not, fundamentally, a technological problem. It was an epistemological one.

The firm did not lack information. It lacked, in a precise sense, knowledge—understood not as mere content, but as content organized according to identity, context, and purpose. Documents existed, but their relationships to one another were often implicit rather than explicit. Categories existed, but they had grown organically, accumulating exceptions and inconsistencies over time. Ownership existed, but it was diffuse, distributed across practice groups and individuals with varying incentives to maintain it.

Into this environment, the firm had introduced a system whose primary function was to find and generalize from structure in whatever it was given.

It was, perhaps, inevitable that the result would be instability.

The response, once articulated, was both obvious and unexpectedly demanding. Rather than attempting to refine the AI in isolation—to tweak prompts, adjust parameters, or search for more sophisticated models—the firm turned its attention to the underlying knowledge environment.

What followed was not a technological project, at least not in the conventional sense, but something closer to an act of institutional introspection.

Content was audited at scale. Documents were evaluated not merely for relevance, but for authority. Entire categories of material—legacy precedents, duplicative records, artifacts of long-forgotten matters—were archived or removed. Taxonomies, once treated as static, were redesigned to reflect the actual conceptual structure of the firm’s work: not just what documents were, but how and why they were used.

Perhaps most significantly, ownership was clarified. Knowledge was no longer allowed to exist in a state of benign neglect. It belonged to someone, and that someone was responsible for its accuracy, its currency, and its place within the broader system.
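In concrete terms, the discipline described here might be reduced to a handful of fields attached to every document, and a rule for deciding which documents count as authoritative. What follows is a minimal sketch under stated assumptions, not the firm's actual system: the field names (`category`, `status`, `owner`, `last_reviewed`), the `Status` values, and the one-year review window are all hypothetical, introduced only for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import List, Optional


class Status(Enum):
    """Hypothetical lifecycle states for a piece of firm knowledge."""
    CURRENT = "current"
    OBSOLETE = "obsolete"
    ARCHIVED = "archived"


@dataclass
class Document:
    title: str
    category: str                   # taxonomy label (illustrative)
    status: Status
    owner: Optional[str]            # None means nobody is accountable
    last_reviewed: Optional[date]   # None means never reviewed


def is_authoritative(
    doc: Document,
    today: date,
    max_age: timedelta = timedelta(days=365),  # assumed review window
) -> bool:
    """A document is authoritative only if it is current, owned, and recently reviewed."""
    if doc.status is not Status.CURRENT:
        return False
    if doc.owner is None or doc.last_reviewed is None:
        return False
    return today - doc.last_reviewed <= max_age


def authoritative_corpus(docs: List[Document], today: date) -> List[Document]:
    """Keep only the documents that pass the authority check."""
    return [d for d in docs if is_authoritative(d, today)]
```

Under a rule of this kind, only documents that pass the check would be handed to search or to an AI system; everything else would be routed back to an owner for review, archiving, or removal.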

This work, by all accounts, was neither glamorous nor straightforward. It required negotiation across practice groups, each with its own language and priorities. It required, at times, the unsettling recognition that long-standing materials—documents that had been relied upon for years—were no longer fit for purpose. It required a kind of intellectual discipline that is rarely rewarded in the short term.

And yet, as the environment became more coherent, something else began to change.

Search improved, not dramatically but perceptibly. Results became more predictable, more aligned with user intent. Lawyers, who had long since developed informal workarounds for the system’s deficiencies, began to trust it, if only tentatively.

When AI was reintroduced into this environment, the difference was not explosive, but it was unmistakable.

Outputs became more consistent. Variations, when they appeared, were more often traceable to identifiable differences in input rather than inexplicable artifacts of the system. The need for constant verification was not eliminated, but it fell to a level that made the technology practically useful rather than merely interesting.

“It wasn’t that the AI got smarter,” Sarah reflected. “It’s that it had something to stand on.”

This phrase—something to stand on—captures, with a certain elegance, the relationship between AI and knowledge. The former is often imagined as a kind of independent intelligence, capable of transcending the limitations of its inputs. In practice, it is better understood as a force multiplier: it amplifies whatever structure it is given.

In a well-ordered environment, it produces leverage—speed, scale, and the ability to surface patterns that would otherwise remain latent. In a disordered one, it produces noise—plausible but unreliable outputs that erode trust and, in time, invite rejection.

The implication, though rarely stated so plainly, is that the true constraint on AI adoption is not access to models, nor even to data in the abstract. It is the quality of the system that transforms data into knowledge.

This is a less comfortable conclusion than the industry’s prevailing narrative would suggest. It offers no immediate transformation, no simple procurement decision that will resolve the problem. Instead, it points back to the organization itself—to its habits, its structures, and its willingness to impose order where, for years, disorder has been tolerated.

In intelligence work, there is a well-worn distinction between signal and noise. The former is valuable precisely because it can be distinguished from the latter; without that distinction, information loses its utility. The task of the analyst is not merely to collect data, but to structure it in such a way that signal can emerge.

What Sarah and Grant had encountered, in their own domain, was a version of this problem. They had not been lacking in data. They had been lacking in the conditions under which data becomes meaningful.

AI, for all its sophistication, had simply made that fact impossible to ignore.

And so, the question facing firms is not, as it is often framed, whether they will adopt artificial intelligence. In one form or another, they will. The more interesting question is whether they will do the quieter, more exacting work required to make that adoption worthwhile.

For without that work, the system—no matter how advanced—will remain what it was at the outset: a mirror, reflecting back the organization’s own unresolved disorder, only now at scale.